I've shown this plot a couple of times already, but here it is again, with another that shows the bar we have to limbo under to win $3 million.

Anyone think this is possible?

Any predictions of what the final winning error will be?

I predict 0.453

Place your bets here...

Monday, 26 December 2011

Saturday, 17 December 2011

What's Going On Here

In many of the analytics problems I have been involved in, the problem you end up dealing with is not the one you initially were briefed to solve.

These new problems are always discovered by visualising the data in some way and spotting curious patterns.

Here are a three of examples...

1. Algorithmic Trading Challenge

The Algorithmic Trading Challenge is based on data from the London Stock Exchange and is about things called 'Liquidity Shocks'. I know nothing about these but we had data, so the first thing I did was plot a few graphs to see id I could get a better understanding of things.

The plot below shows the times these 'Liquidity Shocks' occur.

Now it is quite clear there is something going on at 1pm, 2:30pm, after 3:30pm and at 4pm.

Interestingly these spikes are only evident when all commodities are looked at together, they are not as obvious in any individual commodity.

My first question if I was solving a business problem would be to return to the business to get more insight in what was going on here. My initial thoughts were lunch breaks and the opening times of other Stock Exchanges around the world - as 3:30pm London time could be around opening time in New York.

Understanding the cause of these peaks is important as you would expect the reaction to them (the problem to solve) to be a function of the cause.

If we did discover it was the opening times of other exchanges, then I would ask for extra information like the specific dates, so I could calculate when these peaks would occur in the future when the clocks changed. We do not have this information at the current time, or even the day of the week (it can be inferred but not accurately as there will be public holidays when the exchanges are closed)

As it stands any models built could potentially fail on the leaderboard (or real life) data as our model might think 2:30pm is a special time, wheras really it is when another exchange opens, or when people come back from lunch. We need this causal information rather than just dealing with the effect - time differences change - lunch breaks may change.

The current competition data is potentially lacking the full information required to build a model that is as robust as possible over time.

2. Interesting Distributions

One of the first things I do when receiving a data set is to scan the distributions of all variables to sanity check them for anything that looks out of place - but still things can sneak past you.

The following is exam mark data in the range 0-100. If we bin it in 20 bins then things look reasonable, but if we zoom in then we get the 'what is going on here' question again. It is quite clear what is going on, but if exam marks is the thing we are trying to predict, how do we deal with this phenomenon and how would our algorithm cope looking at it blindly? And what if the pass mark changed or rules changes - the algorithm would fail. Again, we need to be aware of the underlying root cause and not just the effect.

3. Don't Get Kicked

This is another Kaggle Competition...

Kicked cars often result when there are tampered odometers, mechanical issues the dealer is not able to address, issues with getting the vehicle title from the seller, or some other unforeseen problem. Kick cars can be very costly to dealers after transportation cost, throw-away repair work, and market losses in reselling the vehicle.

Modelers who can figure out which cars have a higher risk of being kick can provide real value to dealerships trying to provide the best inventory selection possible to their customers.

The challenge of this competition is to predict if the car purchased at the Auction is a Kick (bad buy)

This is a binary classification task and a quick way to spot data issues with this type of problem is to throw it in a decision tree in order to spot what are called 'gimmees'. These are cases that are easily perfectly predictable and are more than often a result of giving prediction data that just shouldn't be there as it is not known at the time (future information) - an extraction issue that would result in a useless model (It is common that people think they have built really good predictive models using future information without really questioning why their models are so good!).

Another reason 'gimmees' occur are poorly defined target variables, that is not excluding certain cases (and example in target marketing would be not excluding dead people from your mailing list and then predicting they won't respond to your offer!)

After a bit of data prep I threw the Don't Get Kicked Data into a Tiberius Decision Tree - the visual below immediately tells me there are clear cut cases of cars that will be kicked - it is almost black and white.

These 'gimmees' can be described by the rules...

[WheelTypeID] = 'NULL' AND [Auction] <> 'MANHEIM'

MANHEIM is an auctioneering company where cars are auctioned - there are 2 main auctioneers in the data set plus 'other'.

Having worked extensively with car auction data before I know that there are certain auctions where only 'write off' cars are sold, that is those that are sold for scrap because they have been in accidents. I also know that different auction houses will record data differently.

The above simple rule easily identifies cars that are more than likely going to be 'knocked' - but this is probably because they are 'knocked' in the first place (are we saying that someone in a coma is more likely to die). Is this useful? Is this a poorly defined definition of what is 'knocked'? Why does a missing value for WheelTypeID make such a big difference between auction houses?

A bit more digging reveals location and the specific buyer drills down on these gimmees even more...

[WheelTypeID] = 'NULL' AND [Auction] <> 'MANHEIM' AND [VNST] in ('NC','AZ') AND [BYRNO] NOT IN (99750,99761)

and after excluding these 'gimmees' it becomes clear there are certain buyers that just don't but knocked cars, especially 99750 and 99761...

byrno = 99750 and VNST in ('SC','NC','UT','ID','PA','WV','MO','WA')

byrno = 99761 and Auction = 'MANHEIM'

byrno = 99761 and MAKE = 'SUZUKI'

byrno = 99761 and SIZE = 'VAN'

byrno = 99761 and VNST IN ('FL','VA')

Now is this actually useful?

The challenge of this competition is to predict if the car purchased at the Auction is a Kick (bad buy)

The model is going to focus on who bought the car rather than the characteristics of the car itself. What happens if buyers suddenly change their policy? Wouldn't we rather just go and speak to these buyers to understand what their policy is and hence get some business understanding? Why is specific auction house location so important? Is it because of the specific auction house itself or that specific cars are actually routed to specific places (this does happen).

Basically if this was a real client engagement I would be going back to them with a lot of questions to help me understand the data better so it can be used in a way that is going to be useful to them.

In Summary

When doing predictive modelling, you can throw the latest hot algorithm at a problem such as a GBM, Neural Net or Random Forest and get impressive results, but unless you thoroughly understand and account for the real dynamics of what is going on then the models could disastrously fail when these dynamics change. I find visualisation the key to spotting and interpreting these dynamics - which is why I would rather have a good data miner who knows what he is doing using free software over a poor data miner with the most expensive software - see http://analystfirst.com/analyst-first-101/

These new problems are always discovered by visualising the data in some way and spotting curious patterns.

Here are a three of examples...

1. Algorithmic Trading Challenge

The Algorithmic Trading Challenge is based on data from the London Stock Exchange and is about things called 'Liquidity Shocks'. I know nothing about these but we had data, so the first thing I did was plot a few graphs to see id I could get a better understanding of things.

The plot below shows the times these 'Liquidity Shocks' occur.

Now it is quite clear there is something going on at 1pm, 2:30pm, after 3:30pm and at 4pm.

Interestingly these spikes are only evident when all commodities are looked at together, they are not as obvious in any individual commodity.

My first question if I was solving a business problem would be to return to the business to get more insight in what was going on here. My initial thoughts were lunch breaks and the opening times of other Stock Exchanges around the world - as 3:30pm London time could be around opening time in New York.

Understanding the cause of these peaks is important as you would expect the reaction to them (the problem to solve) to be a function of the cause.

If we did discover it was the opening times of other exchanges, then I would ask for extra information like the specific dates, so I could calculate when these peaks would occur in the future when the clocks changed. We do not have this information at the current time, or even the day of the week (it can be inferred but not accurately as there will be public holidays when the exchanges are closed)

As it stands any models built could potentially fail on the leaderboard (or real life) data as our model might think 2:30pm is a special time, wheras really it is when another exchange opens, or when people come back from lunch. We need this causal information rather than just dealing with the effect - time differences change - lunch breaks may change.

The current competition data is potentially lacking the full information required to build a model that is as robust as possible over time.

2. Interesting Distributions

One of the first things I do when receiving a data set is to scan the distributions of all variables to sanity check them for anything that looks out of place - but still things can sneak past you.

The following is exam mark data in the range 0-100. If we bin it in 20 bins then things look reasonable, but if we zoom in then we get the 'what is going on here' question again. It is quite clear what is going on, but if exam marks is the thing we are trying to predict, how do we deal with this phenomenon and how would our algorithm cope looking at it blindly? And what if the pass mark changed or rules changes - the algorithm would fail. Again, we need to be aware of the underlying root cause and not just the effect.

3. Don't Get Kicked

This is another Kaggle Competition...

Kicked cars often result when there are tampered odometers, mechanical issues the dealer is not able to address, issues with getting the vehicle title from the seller, or some other unforeseen problem. Kick cars can be very costly to dealers after transportation cost, throw-away repair work, and market losses in reselling the vehicle.

Modelers who can figure out which cars have a higher risk of being kick can provide real value to dealerships trying to provide the best inventory selection possible to their customers.

The challenge of this competition is to predict if the car purchased at the Auction is a Kick (bad buy)

This is a binary classification task and a quick way to spot data issues with this type of problem is to throw it in a decision tree in order to spot what are called 'gimmees'. These are cases that are easily perfectly predictable and are more than often a result of giving prediction data that just shouldn't be there as it is not known at the time (future information) - an extraction issue that would result in a useless model (It is common that people think they have built really good predictive models using future information without really questioning why their models are so good!).

Another reason 'gimmees' occur are poorly defined target variables, that is not excluding certain cases (and example in target marketing would be not excluding dead people from your mailing list and then predicting they won't respond to your offer!)

After a bit of data prep I threw the Don't Get Kicked Data into a Tiberius Decision Tree - the visual below immediately tells me there are clear cut cases of cars that will be kicked - it is almost black and white.

These 'gimmees' can be described by the rules...

[WheelTypeID] = 'NULL' AND [Auction] <> 'MANHEIM'

MANHEIM is an auctioneering company where cars are auctioned - there are 2 main auctioneers in the data set plus 'other'.

Having worked extensively with car auction data before I know that there are certain auctions where only 'write off' cars are sold, that is those that are sold for scrap because they have been in accidents. I also know that different auction houses will record data differently.

The above simple rule easily identifies cars that are more than likely going to be 'knocked' - but this is probably because they are 'knocked' in the first place (are we saying that someone in a coma is more likely to die). Is this useful? Is this a poorly defined definition of what is 'knocked'? Why does a missing value for WheelTypeID make such a big difference between auction houses?

A bit more digging reveals location and the specific buyer drills down on these gimmees even more...

[WheelTypeID] = 'NULL' AND [Auction] <> 'MANHEIM' AND [VNST] in ('NC','AZ') AND [BYRNO] NOT IN (99750,99761)

and after excluding these 'gimmees' it becomes clear there are certain buyers that just don't but knocked cars, especially 99750 and 99761...

byrno = 99750 and VNST in ('SC','NC','UT','ID','PA','WV','MO','WA')

byrno = 99761 and Auction = 'MANHEIM'

byrno = 99761 and MAKE = 'SUZUKI'

byrno = 99761 and SIZE = 'VAN'

byrno = 99761 and VNST IN ('FL','VA')

Now is this actually useful?

The challenge of this competition is to predict if the car purchased at the Auction is a Kick (bad buy)

The model is going to focus on who bought the car rather than the characteristics of the car itself. What happens if buyers suddenly change their policy? Wouldn't we rather just go and speak to these buyers to understand what their policy is and hence get some business understanding? Why is specific auction house location so important? Is it because of the specific auction house itself or that specific cars are actually routed to specific places (this does happen).

Basically if this was a real client engagement I would be going back to them with a lot of questions to help me understand the data better so it can be used in a way that is going to be useful to them.

In Summary

When doing predictive modelling, you can throw the latest hot algorithm at a problem such as a GBM, Neural Net or Random Forest and get impressive results, but unless you thoroughly understand and account for the real dynamics of what is going on then the models could disastrously fail when these dynamics change. I find visualisation the key to spotting and interpreting these dynamics - which is why I would rather have a good data miner who knows what he is doing using free software over a poor data miner with the most expensive software - see http://analystfirst.com/analyst-first-101/

Friday, 16 December 2011

Two Become One

In the previous post I looked at the HHP leaderboard and discovered some interesting patterns regarding certain teams.

It looks as the evidence proved out to be true, with SD_John and Lily now all of a sudden merging into a single team.

Interestingly they have also been in other competitions with very similar results.

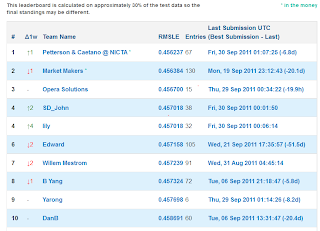

This was the final standing in the Give Me Some Credit competiton,

What is actually more interesting here is the demonstration of overfitting to the leaderboard. Opera Solutions & JYL are more than likely working together and we know Lily & SD_John are working together. If you look at the leaderboard just before the competition ended (on the 30%) you will see Opera near the top but the final position on the 70% was much worse. Similarly a few others found that relying on the leaderboard as an indication of the final position can be misplaced trust.

If you followed the competition forum, you will see team VSU also had multiple accounts for the same person, and they seem to have also fallen into the same trap of overfitting to the leaderboard - they ended up 9th on the 70% when they were first on the 30%.

The data mining lesson here is that you need to take all necessary steps to avoid overfitting, rather than just relying on the leaderboard feedback.

Congratulations to Nathaniel, Eu Jin (small world - I used to work with Nathaniel at the National Australia Bank and regularly see Eu Jin at the Melbourne R user group) and Alec, who clearly did not overfit. A Perfect Storm!

It looks as the evidence proved out to be true, with SD_John and Lily now all of a sudden merging into a single team.

Interestingly they have also been in other competitions with very similar results.

This was the final standing in the Give Me Some Credit competiton,

What is actually more interesting here is the demonstration of overfitting to the leaderboard. Opera Solutions & JYL are more than likely working together and we know Lily & SD_John are working together. If you look at the leaderboard just before the competition ended (on the 30%) you will see Opera near the top but the final position on the 70% was much worse. Similarly a few others found that relying on the leaderboard as an indication of the final position can be misplaced trust.

If you followed the competition forum, you will see team VSU also had multiple accounts for the same person, and they seem to have also fallen into the same trap of overfitting to the leaderboard - they ended up 9th on the 70% when they were first on the 30%.

The data mining lesson here is that you need to take all necessary steps to avoid overfitting, rather than just relying on the leaderboard feedback.

Congratulations to Nathaniel, Eu Jin (small world - I used to work with Nathaniel at the National Australia Bank and regularly see Eu Jin at the Melbourne R user group) and Alec, who clearly did not overfit. A Perfect Storm!

Wednesday, 14 December 2011

Phantom of the Opera

There have been some recent announcements on Kaggle reminding competitors about the rules regarding teams and that a single person can't have muliple accounts in order to get around the daily submission limit.

I used the HHP leaderboard as an interesting data source to educate myself on the data manipulation capabilities in R and it became very evident that there was some curious behaviour going on.

From a data scientist viewpoint, this demonstrates the power of the human eye in picking up things that will give you the insight that an algorithm won't. In most (probably all) of my professional projects the important data issues and findings have been a result of looking at visualisations of the data and asking the question "what's going on here!".

The first curiosity on the leaderboard was by trying to discover if the competition was attracting new entrants by looking at the dates of the first submissions of entrants. The two plots below show different ways of looking at the same data. What is obvious is that the 29th Nov had an unusual number of new entrants.

and looked at in another way...

What's going on here?

If you look at the team name of the entrants it is clear that all these accounts are somewhat connected - so no real mystery as to the cause of the blip for this date.

"accnt002" "accnt003" "accnt004" "accnt005" "accnt006"

"accnt007" "accnt008" "accnt009" "cyclops" "Faber"

"Farbe" "Fortis" "glad5" "glad55" "gladiator"

"gladiator1" "gladiator2" "gladiator3" "jackie" "Kaggleacctk"

"KaggleK2" "sashik"

The next two plots show the scores of the first submission of teams.

What's going on here?

The common scores where the steps are seen are the all zeros benchmark, optimised constant benchmark and the code we posted in our writeup - so this is explained. There is another common first score which is another very simple model that many teams independently thought of.

What does raise an eyebrow from the cumulative plot is one team stands out as having a very impressive first score. This is team YARONG who posted a very impressive model of 0.457698 on the first attempt and it still remains their best score 22 attempts later. This is possible (you don't need to submit models to blend them if you have your own holdout set - see the IBM writeup in the KDD Cup Orange Challenge) but somewhat unlikely as we know from the writeups that an individual model will get you no where near this score.

If you look at the dates teams submit and look at some sort of correlation of entry dates, one team appears twice towards the top - SD_John, and they are also at the top of the leaderboard.

td.row td.col pairs correl

UCI-CS273A-RegAll Alex_Tot 27 0.9979902

rutgers HappyAcura 29 0.9978254

SD_John lily 34 0.9974190

Roger99 Krakozjabra 21 0.9956643

SD_John JYL 24 0.9950884

The_Cuckoo's_Nest NumberNinja 23 0.9931073

NumberNinja Chris_R 29 0.9924864

What's is going on here?

If you plot the submissions and scores you will see SD_John and Lily seem to perfectly track each other in both the days they submit, the times they submit and the scores they get.

And on one particular day they get exactly the same score within 5 minutes of each other...

SD_John and JYL seem to also track each other in submission dates. Interestingly JYL has a very similar profile to a member of Opera, and a little digging would suggest this is one and the same person.

So here we can hypothesize that SD_john, lily, JYL and Opera (and evidence also suggests many more teams) are collaborating in some way.

Interesting - all from following your nose, which is what good data mining is all about.

In conclusion, the top of the leaderboard is not really what it appears to be - which I hope will encourage others to keep trying.

The main reason for this investigation was to help me discover what R can do to manipulate data - and the answer is basically anything you want it to do. You first have to know what you want to achieve then do some Googling and you will find some code to help you somewhere.

I used the HHP leaderboard as an interesting data source to educate myself on the data manipulation capabilities in R and it became very evident that there was some curious behaviour going on.

From a data scientist viewpoint, this demonstrates the power of the human eye in picking up things that will give you the insight that an algorithm won't. In most (probably all) of my professional projects the important data issues and findings have been a result of looking at visualisations of the data and asking the question "what's going on here!".

The first curiosity on the leaderboard was by trying to discover if the competition was attracting new entrants by looking at the dates of the first submissions of entrants. The two plots below show different ways of looking at the same data. What is obvious is that the 29th Nov had an unusual number of new entrants.

and looked at in another way...

What's going on here?

If you look at the team name of the entrants it is clear that all these accounts are somewhat connected - so no real mystery as to the cause of the blip for this date.

"accnt002" "accnt003" "accnt004" "accnt005" "accnt006"

"accnt007" "accnt008" "accnt009" "cyclops" "Faber"

"Farbe" "Fortis" "glad5" "glad55" "gladiator"

"gladiator1" "gladiator2" "gladiator3" "jackie" "Kaggleacctk"

"KaggleK2" "sashik"

The next two plots show the scores of the first submission of teams.

What's going on here?

The common scores where the steps are seen are the all zeros benchmark, optimised constant benchmark and the code we posted in our writeup - so this is explained. There is another common first score which is another very simple model that many teams independently thought of.

What does raise an eyebrow from the cumulative plot is one team stands out as having a very impressive first score. This is team YARONG who posted a very impressive model of 0.457698 on the first attempt and it still remains their best score 22 attempts later. This is possible (you don't need to submit models to blend them if you have your own holdout set - see the IBM writeup in the KDD Cup Orange Challenge) but somewhat unlikely as we know from the writeups that an individual model will get you no where near this score.

If you look at the dates teams submit and look at some sort of correlation of entry dates, one team appears twice towards the top - SD_John, and they are also at the top of the leaderboard.

td.row td.col pairs correl

UCI-CS273A-RegAll Alex_Tot 27 0.9979902

rutgers HappyAcura 29 0.9978254

SD_John lily 34 0.9974190

Roger99 Krakozjabra 21 0.9956643

SD_John JYL 24 0.9950884

The_Cuckoo's_Nest NumberNinja 23 0.9931073

NumberNinja Chris_R 29 0.9924864

What's is going on here?

If you plot the submissions and scores you will see SD_John and Lily seem to perfectly track each other in both the days they submit, the times they submit and the scores they get.

And on one particular day they get exactly the same score within 5 minutes of each other...

SD_John and JYL seem to also track each other in submission dates. Interestingly JYL has a very similar profile to a member of Opera, and a little digging would suggest this is one and the same person.

So here we can hypothesize that SD_john, lily, JYL and Opera (and evidence also suggests many more teams) are collaborating in some way.

Interesting - all from following your nose, which is what good data mining is all about.

In conclusion, the top of the leaderboard is not really what it appears to be - which I hope will encourage others to keep trying.

The main reason for this investigation was to help me discover what R can do to manipulate data - and the answer is basically anything you want it to do. You first have to know what you want to achieve then do some Googling and you will find some code to help you somewhere.

Subscribe to:

Comments (Atom)